BDMaaS+

Business-Driven Management as a Service Plus (BDMaaS+) is a new framework that significantly evolves our previous BDMaaS proposal by introducing several elements of technical novelty.

- It supports the placement of realistic multi-tier services consisting of complex workflows made by multiple application components of different types (e.g., Web Server, App Server, Relational Databases, Transaction Servers and Queue Managers). Such a placement is based on real network measurements and monetary costs for a large-scale Cloud computing environment, implemented on top of 6 different Amazon EC2 data centers and 2 private clouds.

- It leverages our experience in both service/system modeling and inter-data center network delay modeling to feed our novel simulator with realistic characterizations, to be accounted for by the dynamic re-adaptation of component deployment at runtime.

- It adopts a simulative approach to reenact IT services under different configurations to accurately capture peculiar behavior of real-life IT services, and it adopts an innovative optimization solution based on a memetic algorithm to enable robust and resilient exploration of the large and challenging search space, thus realizing an effective what-if scenario analysis tool.

- It has been implemented and used to collect a wide set of experimental results that demonstrate the benefits, the original aspects, and the effectiveness of our proposal.

The main goal of the BDMaaS+ framework is the management of service placement in hybrid cloud environments by minimizing the business impact of the deployment with respect to all service provider operational and non-operational business criteria, especially considering realistic inter-datacenter cloud scenarios.

For the sake of simplicity and generalization, BDMaaS+ currently focuses on Web Services (WSs) as the basic building blocks for the realization of complex IT services as workflows of WSs composed according to the WS-BPEL standard. In other words, BDMaaS+ conceptually operates at the Platform-as-a-Service (PaaS) level with the main goal of finding the best placement configuration of the WSs in the distributed Cloud environment.

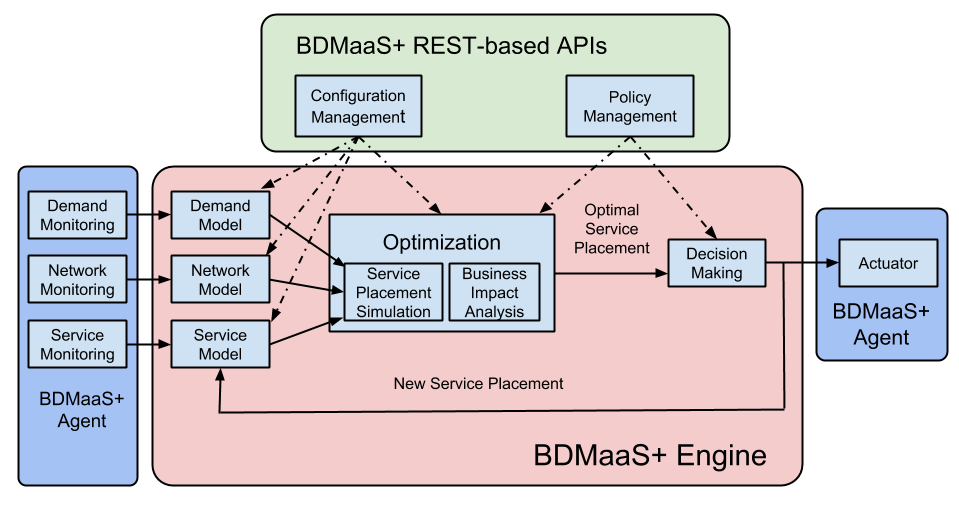

We organized BDMaaS+ in several subcomponents, each addressing one of the required management aspects. The API component, namely, the BDMaaS+ REST-based APIs, allows service providers to interact with the BDMaaS+ engine and includes two components, namely, Configuration Management and Policy Management. These components allow service providers to enter a configuration of the Cloud computing environment (e.g., number of data centers, service model, etc.) and to select the optimization policies to apply (e.g., business objectives, parameters for the optimization algorithm, etc.).

The BDMaaS+ engine consists of three main stages that work in a pipeline, namely, modeling, optimization, and decision making. At the modeling stage, Demand Model, Network Model, and Service Model are the three main components. They provide, respectively, the functions for: i) building the models of the service request arrival process (e.g., customers’ locations, distributions of service request inter-arrival times, etc.); ii) gathering and processing network measurements collected in the field by local measurement agents deployed at all private/public cloud data centers to draw realistic network models; and iii) emulating IT service execution and deployment (e.g., service time distribution, current service component placement, etc.). These three modules are fed by their respective monitoring agents, shown in the leftmost part of the figure, which integrate with existing cloud platforms to gather monitoring information at the infrastructure (virtual resources) and application component levels and use it to update the parameters of the service execution model.

The optimization stage consists of the Optimization macro-component and represents the core part of BDMaaS+. It is in charge of reenacting the Cloud computing IT service and of evaluating possible alternative service placement configurations over the hybrid cloud environment. First, the Service Placement Simulation component mimics possible service placements among those generated by the modeling stage. Then, the Business Impact Analysis component assigns an overall cost (namely, business impact) to each of these possible configurations.

At the third stage, the Decision Making component selects the best IT service placement configuration, namely, the one minimizing the business impact, according to the user preferences, current network conditions, and the output data provided by the Optimization component. BDMaaS+ was designed to be easily integrated with existing Cloud-based IT services through lightweight BDMaaS agents installed at each data center. Each agent includes three relatively simple and implementation-specific “connector” components: Demand Monitoring, Service Monitoring, and Actuator, depicted in green in Fig. 1. Finally, the Actuator component is capable of automatically putting the new service configuration in place as required by the Decision Making component.
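The flow from simulation to business impact to decision can be pictured with a minimal sketch. All names here (`Placement`, `decide`, `toy_simulate`, `toy_impact`) and the latency figures are hypothetical stand-ins for illustration; they are not the BDMaaS+ API or its cost model.

```python
from typing import Callable, Dict, Iterable, Tuple

# A placement maps each software component to a data center (hypothetical type).
Placement = Dict[str, str]


def decide(candidates: Iterable[Placement],
           simulate: Callable[[Placement], dict],
           business_impact: Callable[[dict], float]) -> Tuple[Placement, float]:
    """Return the candidate placement with the lowest business impact."""
    best, best_cost = None, float("inf")
    for placement in candidates:
        metrics = simulate(placement)      # reenact the service under this placement
        cost = business_impact(metrics)    # assign an overall cost to the outcome
        if cost < best_cost:               # keep the minimum-impact configuration
            best, best_cost = placement, cost
    if best is None:
        raise ValueError("no candidate placements supplied")
    return best, best_cost


# Toy stand-ins for the simulator and the cost model (made-up numbers).
def toy_simulate(placement: Placement) -> dict:
    latency = {"dc-a": 0.050, "dc-b": 0.020}  # seconds per component, by data center
    return {"mean_response_time": sum(latency[dc] for dc in placement.values())}


def toy_impact(metrics: dict) -> float:
    return 1000.0 * metrics["mean_response_time"]


candidates = [
    {"web": "dc-a", "app": "dc-a"},
    {"web": "dc-b", "app": "dc-a"},
    {"web": "dc-b", "app": "dc-b"},
]
best, cost = decide(candidates, toy_simulate, toy_impact)
```

Passing the simulator and the cost function as arguments mirrors the separation between the Service Placement Simulation and Business Impact Analysis components, so either could be swapped without touching the selection logic.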

SISFC is a discrete-event simulator designed to reenact the behaviour of IT services in federated Cloud environments.

Implementation Details

SISFC is implemented in Ruby.

Getting SISFC

The SISFC source code is available on GitHub.

To illustrate how BDMaaS+ works, let us consider a realistic case study that captures the behavior of an enterprise-class IT service deployed on a large scale for customers with global presence, and apply BDMaaS+ to its optimization. More specifically, let us try to optimize with BDMaaS+ the architecture of a Money Management and Transfer System (MMTS) IT service, which allows users to manage their bank accounts and to submit money transfer requests.

The MMTS IT service represents an interesting case study that raises non-trivial challenges from the optimal software component placement perspective. In fact, this type of IT service often leverages legacy software components that cannot be easily migrated, as well as other software components that implement sensitive functions and whose deployment is thus subject to security constraints.

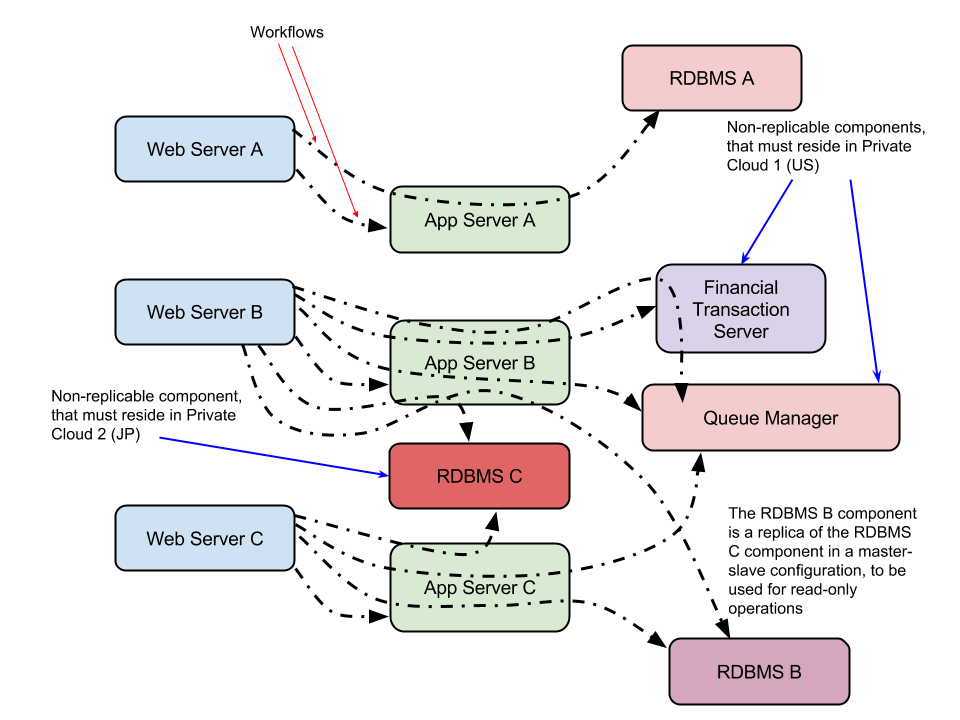

The MMTS IT service is composed of 3 applications, which we label A, B, and C. A is an ASP.NET application running on Microsoft Windows, B is a Web application based on the LAR (Linux, Apache, Ruby on Rails) stack, and C is a LEMP (Linux, Nginx, MySQL, PHP) application.

The architecture of the applications, depicted in Fig. 1, mostly follows the classic 3-tier paradigm, with a Web Server, an Application Server and a DataBase Management System. However, the 3-tier paradigm is extended by some components such as a Financial Transaction System and a Queue Manager that represents the interface to a reporting system based, e.g., on PDF report generation and submission through an E-mail Server. (The reporting function represents an external support system for MMTS, and as a result we do not consider it in the software component placement optimization.) In addition, applications B and C share the same replicated DataBase: a MySQL DBMS configured with master/slave replication.

For the IT service deployment, we consider a federation of 7 different Cloud data centers, as depicted in Fig. 2. More specifically, we consider 5 public Cloud data centers representing Amazon EC2’s US East, US West, EU, Brazil, and Asia Pacific (Singapore) data centers, and 2 private Cloud data centers, respectively located in North America and in the Asia/Pacific region.

Fig. 2.

We also consider a few deployment constraints. The MySQL master component, identified as RDBMS C in Fig. 2, must reside in Private Cloud 1 (US) for security reasons and cannot be migrated to any other data center. The Financial Transaction System component is implemented by a legacy system, residing in private Cloud data center 2, which cannot be migrated to any other data center. All the other software components can be moved around easily.
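These two constraints can be expressed as a simple feasibility check over candidate placements. The sketch below is illustrative only: the component and data center identifiers, and the `is_feasible` and `repair` helpers, are hypothetical names rather than anything defined by BDMaaS+.

```python
# Components pinned to a data center, following the constraints described above.
# The identifiers themselves are illustrative.
PINNED = {
    "rdbms_c_master": "private-cloud-1",
    "financial_transaction_system": "private-cloud-2",
}


def is_feasible(placement: dict) -> bool:
    """True if every pinned component sits in its mandated data center."""
    return all(placement.get(component) == dc for component, dc in PINNED.items())


def repair(placement: dict) -> dict:
    """Return a copy of the placement with pinned components forced into place."""
    fixed = dict(placement)
    fixed.update(PINNED)
    return fixed


candidate = {
    "web_server_a": "ec2-us-east",
    "rdbms_c_master": "ec2-eu",  # violates the security constraint
    "financial_transaction_system": "private-cloud-2",
}
repaired = repair(candidate)
```

Repairing an infeasible candidate, rather than discarding it, is one possible design choice; it keeps the free components' assignments while restoring the pinned ones.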

We consider a customer with global presence, with one division in each of the 5 following locations: East Coast USA, West Coast USA, South America, Asia, Europe. The divisions are of varying sizes and account for a different share of requests: 10%, 15%, 25%, 30% and 10% respectively. We assume that the aggregated flow of requests has a constant intensity, with interarrival times modeled by a Pareto distribution with location 1.2E-4 and shape 5, corresponding to a mean rate of about 6,666.67 requests per second. The requests emanating from each division are automatically forwarded to the closest Cloud data center. (This is a common practice, which Amazon, for instance, supports through its Route 53 system.)
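The stated request rate follows from the mean of a Pareto distribution, which is shape × location / (shape − 1) for shape > 1. The sketch below works through that arithmetic and checks it against sampled interarrival times; the function names and the seed are illustrative choices, and the location parameter is assumed to be expressed in seconds.

```python
import random

LOCATION = 1.2e-4  # Pareto location (scale) parameter, assumed to be in seconds
SHAPE = 5.0        # Pareto shape parameter


def pareto_mean(location: float, shape: float) -> float:
    """Mean of a Pareto distribution; finite only for shape > 1."""
    if shape <= 1:
        raise ValueError("the mean is infinite for shape <= 1")
    return shape * location / (shape - 1)


def sample_interarrival(rng: random.Random,
                        location: float = LOCATION,
                        shape: float = SHAPE) -> float:
    # random.paretovariate draws from a Pareto with scale 1, so rescale by location.
    return location * rng.paretovariate(shape)


mean_gap = pareto_mean(LOCATION, SHAPE)  # 5 * 1.2e-4 / 4 = 1.5e-4 s
rate = 1.0 / mean_gap                    # about 6,666.67 requests per second

# Empirical check with a fixed seed.
rng = random.Random(42)
samples = [sample_interarrival(rng) for _ in range(200_000)]
empirical_rate = len(samples) / sum(samples)
```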

In order to model the communication latencies between the different locations, we assume that the times to transfer the content of a request or response message from one location to another follow the Gaussian mixture model we developed in previous work. We assume that the latencies for message transfers within a single location are significantly smaller and can thus be safely ignored.
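As a rough illustration of how such a latency model can be sampled, the sketch below draws transfer times from a two-component Gaussian mixture and returns zero for transfers within the same location. The mixture weights, means, and standard deviations are placeholders rather than measured values, and the function names are hypothetical.

```python
import random

# Hypothetical mixture for one (source, destination) pair:
# (weight, mean in seconds, standard deviation in seconds). Placeholder values.
EXAMPLE_MIXTURE = [(0.7, 0.080, 0.005), (0.3, 0.120, 0.015)]


def sample_latency(rng: random.Random, mixture, floor: float = 0.0) -> float:
    """Pick a component by weight, then draw from its Gaussian (clipped at floor)."""
    weights = [w for w, _, _ in mixture]
    _, mean, std = rng.choices(mixture, weights=weights, k=1)[0]
    return max(floor, rng.gauss(mean, std))


def mixture_mean(mixture) -> float:
    """Weighted mean of the (unclipped) mixture."""
    total = sum(w for w, _, _ in mixture)
    return sum(w * m for w, m, _ in mixture) / total


def transfer_time(rng: random.Random, src: str, dst: str, models: dict) -> float:
    """Latency between two locations; intra-location transfers are ignored."""
    if src == dst:
        return 0.0
    return sample_latency(rng, models[(src, dst)])


rng = random.Random(7)
models = {("us-east", "eu"): EXAMPLE_MIXTURE}
draws = [transfer_time(rng, "us-east", "eu", models) for _ in range(50_000)]
average = sum(draws) / len(draws)
```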

We consider 10 different workflows for the MMTS IT service, as depicted by Fig. 3 and Table I. For each (workflow, customer location) pair, we set an SLO defined as a step function of the average response time metric, with a corresponding violation penalty.
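A step-function SLO of this kind can be sketched as follows, assuming the simplest case of a single threshold per (workflow, customer location) pair. The thresholds, penalties, workflow names, and measured response times below are invented for illustration and do not come from Table I.

```python
def slo_penalty(avg_response_time: float, threshold: float, penalty: float) -> float:
    """Single-step SLO: no penalty up to the threshold, a fixed penalty above it."""
    return penalty if avg_response_time > threshold else 0.0


# Hypothetical SLO table keyed by (workflow, customer location);
# values are (threshold in seconds, violation penalty).
SLOS = {
    ("workflow-1", "europe"): (0.5, 100.0),
    ("workflow-1", "asia"): (0.8, 150.0),
}


def total_penalty(measured: dict, slos: dict) -> float:
    """Sum the penalties over all (workflow, location) pairs."""
    return sum(
        slo_penalty(measured[key], threshold, penalty)
        for key, (threshold, penalty) in slos.items()
    )


# Made-up average response times from one simulated configuration.
measured = {
    ("workflow-1", "europe"): 0.62,  # above 0.5 s: violation
    ("workflow-1", "asia"): 0.40,    # below 0.8 s: compliant
}
penalties = total_penalty(measured, SLOS)
```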

The results we obtained from applying BDMaaS+ to the case study described above are presented in Fig. 3. We configured BDMaaS+ to reenact the MMTS IT service in different configurations for 60 seconds of simulated time, plus 20 seconds of simulation warmup time, roughly corresponding to the processing of 100,000 service requests, and evaluate the performance of each configuration.

Given the problem size and complexity, we configured the QPSO algorithm to use 40 particles and a contraction-expansion coefficient alpha = 0.75 and adopted a 10-sample random search for the simplified VM allocation algorithm.

As can be seen, in only 11 iterations of the QPSO algorithm, each one corresponding to the evaluation of 400 different IT service configurations, BDMaaS+ is capable of finding the optimal configuration for the system, reducing the total daily costs to $28,252.81.
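For readers unfamiliar with QPSO, the sketch below shows a basic form of its update rule with the same swarm size (40) and contraction-expansion coefficient (0.75). It is a generic textbook-style variant applied to a toy continuous function, not the BDMaaS+ optimizer: the `qpso` function, the `sphere` cost, the bounds, and the iteration count are all assumptions made for illustration. Note also that 40 particles times a 10-sample random search gives 400 evaluations per iteration, which is consistent with the figure quoted above.

```python
import math
import random


def qpso(cost, dim, bounds, particles=40, alpha=0.75, iterations=50, seed=0):
    """Minimise `cost` over a box using a basic QPSO update rule."""
    rng = random.Random(seed)
    lo, hi = bounds
    xs = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(particles)]
    pbest = [x[:] for x in xs]                  # personal best positions
    pbest_cost = [cost(x) for x in xs]
    g = min(range(particles), key=pbest_cost.__getitem__)
    gbest, gbest_cost = pbest[g][:], pbest_cost[g]

    for _ in range(iterations):
        # Mean of all personal bests, used to scale the step size.
        mbest = [sum(p[d] for p in pbest) / particles for d in range(dim)]
        for i in range(particles):
            for d in range(dim):
                phi = rng.random()
                u = 1.0 - rng.random()          # in (0, 1], so log(1/u) is finite
                attractor = phi * pbest[i][d] + (1.0 - phi) * gbest[d]
                step = alpha * abs(mbest[d] - xs[i][d]) * math.log(1.0 / u)
                x_new = attractor + step if rng.random() < 0.5 else attractor - step
                xs[i][d] = min(hi, max(lo, x_new))  # keep the particle in the box
            c = cost(xs[i])
            if c < pbest_cost[i]:
                pbest[i], pbest_cost[i] = xs[i][:], c
                if c < gbest_cost:
                    gbest, gbest_cost = xs[i][:], c
    return gbest, gbest_cost


def sphere(x):
    """Toy cost function with its minimum at the origin."""
    return sum(v * v for v in x)


best, best_cost = qpso(sphere, dim=3, bounds=(-10.0, 10.0))
```

In a placement setting, the cost function would instead be the business impact returned by the simulator for a candidate configuration, and positions would need to be mapped to discrete component-to-data-center assignments.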

The BDMaaS+ source code is available on GitHub at the DSG-UniFE/bdmaas-plus-core repository.